Summary

In summer 2022, we conducted a contest on the EA Forum. Authors could submit Forum articles, we judged all of them, and finally we awarded prizes to the top entries. We were delighted with the quality of the top submissions, but were slightly disappointed with the counterfactual effect that our contest had.

Setup

We launched the first version of our online platform for impact markets in May 2022. We wanted to conduct a first live test of the MVP of our platform, get real users interested in it, and test the basic mechanisms of impact markets.

For legal reasons, we omitted one key feature of impact markets, which is the (prospective) seed investment. Seed investments are important for the functioning of impact markets, because they convey that the impact is counterfactually valid. (Issuers would not sell shares to seed investors at low valuations if they didn't have to.) Consequently we expected and explicitly allowed contestants to submit articles that they’d already published up to one month before the start of the contest, which ran for another month.

Specifically, we allowed one month (May) for the submission of existing entries and another month (June) to write and submit fresh entries. We expected to see a (fairly even) 60-40 split between pre-existing articles and fresh articles among our submissions. (This does not imply that our contest was causal in incentivizing the fresh articles, but we think it was for other reasons.)

Evaluation

Going into the contest we expected to have three problems: (1) how to compare the posts to one another, (2) how to translate this comparison into a valuation, and (3) how to size our offers so that they just exhaust our budget. Our plan was to use the Utility Function Extractor for the first problem. For the second, we would set the valuation according to what we thought we would otherwise have to pay, in money and time, to contract someone to write a post as good and impactful as some of our top posts. For the third, we would offer to buy the same fraction of shares from every author, chosen such that the prices add up to our budget.

I, Dawn, at least, was surprised that when I used the Utility Function Extractor, my thought process was always like, “How much more do I value C over B? Well, I valued B at 10A and I value C at 100A, so it seems I value C at 10B.” That defeats the purpose of the tool, which is designed to elicit approximate factors like that. I already had a clear (though highly imprecise) idea of each factor before making the comparison. So I, at least, stopped using the tool and entered my factors directly into a spreadsheet. Finally we normalized our evaluations and averaged them.
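To make the last step concrete, here is a minimal sketch of normalizing and averaging. We haven't spelled out our exact method above, so treat the details as assumptions: each judge's factors are divided by their sum (so every judge carries equal weight), then the arithmetic mean is taken per post. The function names and the second judge's numbers are made up for illustration.

```python
def normalize(scores):
    """Rescale one judge's relative factors so that they sum to 1.

    Assumption: normalization means dividing by the judge's total.
    """
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("factors must sum to a positive number")
    return {post: value / total for post, value in scores.items()}


def average_normalized(judges):
    """Average the normalized factors of several judges, post by post."""
    normalized = [normalize(scores) for scores in judges]
    posts = set(normalized[0])
    if any(set(n) != posts for n in normalized):
        raise ValueError("every judge must rate the same posts")
    return {
        post: sum(n[post] for n in normalized) / len(normalized)
        for post in posts
    }


if __name__ == "__main__":
    judges = [
        {"A": 1, "B": 10, "C": 100},  # the factors from the example above
        {"A": 1, "B": 4, "C": 5},     # hypothetical second judge
    ]
    for post, share in sorted(average_normalized(judges).items()):
        print(post, round(share, 3))
```

Normalizing first means that a judge who happens to use bigger numbers does not dominate the average.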

Setting the valuations was a bit tricky. We didn’t want to insult the authors by low-balling them too much, but we also didn’t want to pay more than we had to. We turned to the budgets and outputs of early Rethink Priorities to form an intuition for what a reasonable valuation might be. If in doubt, we erred a bit on the lower side, expecting to be bargained up.

Finally we found that 20% was the size at which the costs (roughly) exhausted our budget, so we made offers to buy 20% (or 20,000 shares) each at the given valuation.
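The sizing step boils down to simple arithmetic: if we buy the same fraction of every certificate, the total cost is that fraction times the sum of the valuations. Here is a rough sketch. The valuations and the budget are invented for illustration (they are not our real figures), the function names are hypothetical, and the 100,000 shares per certificate is merely what 20% = 20,000 shares implies.

```python
TOTAL_SHARES = 100_000  # implied by "20% (or 20,000 shares)"


def offer_fraction(valuations, budget):
    """Fraction of each certificate we can buy so that costs exhaust the budget."""
    total = sum(valuations)
    if total <= 0:
        raise ValueError("valuations must sum to a positive number")
    # We can never buy more than 100% of a certificate.
    return min(1.0, budget / total)


def size_offers(valuations, budget):
    """Return one (shares, price) pair per certificate."""
    fraction = offer_fraction(valuations, budget)
    return [
        (round(fraction * TOTAL_SHARES), fraction * valuation)
        for valuation in valuations
    ]


if __name__ == "__main__":
    valuations = [5000, 3000, 2000]  # hypothetical valuations in dollars
    budget = 2000                    # hypothetical budget in dollars
    for shares, price in size_offers(valuations, budget):
        print(f"{shares} shares for ${price:.2f}")
```

With these made-up numbers the fraction comes out at 20%, and the three prices add up to the budget.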

Note that we do not think that retrofunders on our markets need to be transparent about their processes! That should be completely optional. If a retrofunder thinks a project is worth $10 million, they should be able to bid $1 million and make a bargain if the sellers accept. That’s how impact markets make philanthropic funding cheaper for foundations.

Results

As it turned out, only 3 of our 20 valid submissions were fresh articles. In these particular cases, we are fairly sure that we have causally influenced their creation.

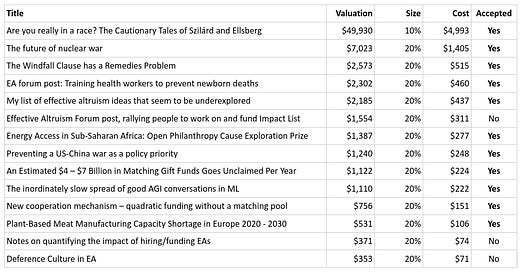

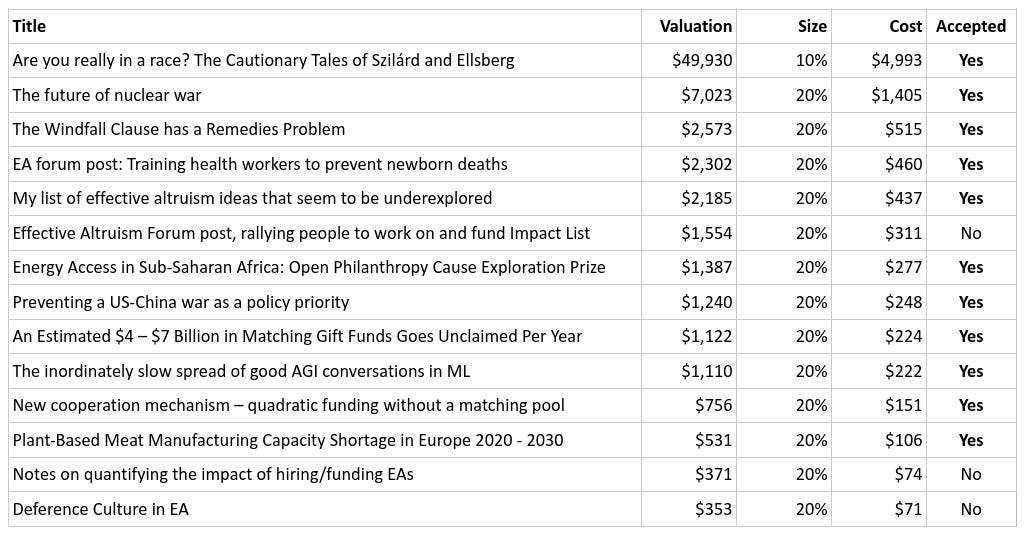

The following is a breakdown of all the articles that we decided to reward, the size of the reward, and whether the author accepted our offer. Further details can be found on our platform. The shares that we’ve bought are now owned by us and Austin Chen/Manifold Markets, split 50-50.

Substack doesn’t support tables, and you can’t click links in images, so here’s the list of certificates:

Are you really in a race? The Cautionary Tales of Szilárd and Ellsberg

EA forum post: Training health workers to prevent newborn deaths

My list of effective altruism ideas that seem to be underexplored

Effective Altruism Forum post, rallying people to work on and fund Impact List

Energy Access in Sub-Saharan Africa: Open Philanthropy Cause Exploration Prize

An Estimated $4 – $7 Billion in Matching Gift Funds Goes Unclaimed Per Year

The inordinately slow spread of good AGI conversations in ML

New cooperation mechanism - quadratic funding without a matching pool

Plant-Based Meat Manufacturing Capacity Shortage in Europe 2020 - 2030

We made all of our offers soon after the judging was complete (mostly in August and September). It's only this postmortem that we, sadly, procrastinated on.

Takeaways

Only 15% rather than 40% of the submissions were counterfactually valid. This was a strong update for me that some mechanism is needed that differentially incentivizes counterfactually valid impact. Our go-to solution to this problem is seed investments. (Though perhaps there are others.) I think they are crucial for the counterfactual effect of impact markets. I would even go so far as to say that one of the key advantages that impact markets (with seed investments) have over classic prize contests is that they provide access to seed funding. Seed funding conveys to the funder that the impact was counterfactually valid and empowers charity entrepreneurs who would not otherwise be able to test their ideas.

I was pleasantly surprised, though, that most of the authors accepted our offers. I tried to make fair offers that didn’t exceed what I thought we would have to pay in terms of time and money to find and contract someone to write an article of comparable quality and impact focus. (Because otherwise impact markets don’t add value.) One author successfully renegotiated the offer so that it’s somewhat higher than this benchmark. Our previous experience (based on a smaller sample) had been that authors were bullish enough on the long-run value of their work that they were loath to sell anything at all at such fundamentals-based valuations.

On the safety side, I didn’t encounter any surprises. We spent a few extra hours thinking about the safety of one article, but ultimately concluded that it is likely positive in expectation. There was a second article where I was unsure about the sign of its effect, and where I was leaning slightly on the negative side, but not to any catastrophic extent. We decided to exclude the second article from retroactive rewards. Two more articles were invalid because they had been published outside the contest period. One author stated already in her submission that she would not sell the article, so we didn’t contact her with our offer. In other cases we valued articles at positive prices that seemed too small to us to warrant the transaction costs.

Going forward we would like to partner with professional funders that run prize contests. We are also considering building momentum by first concentrating on our services for donors and the recipients of donations, but that is a topic for another article.